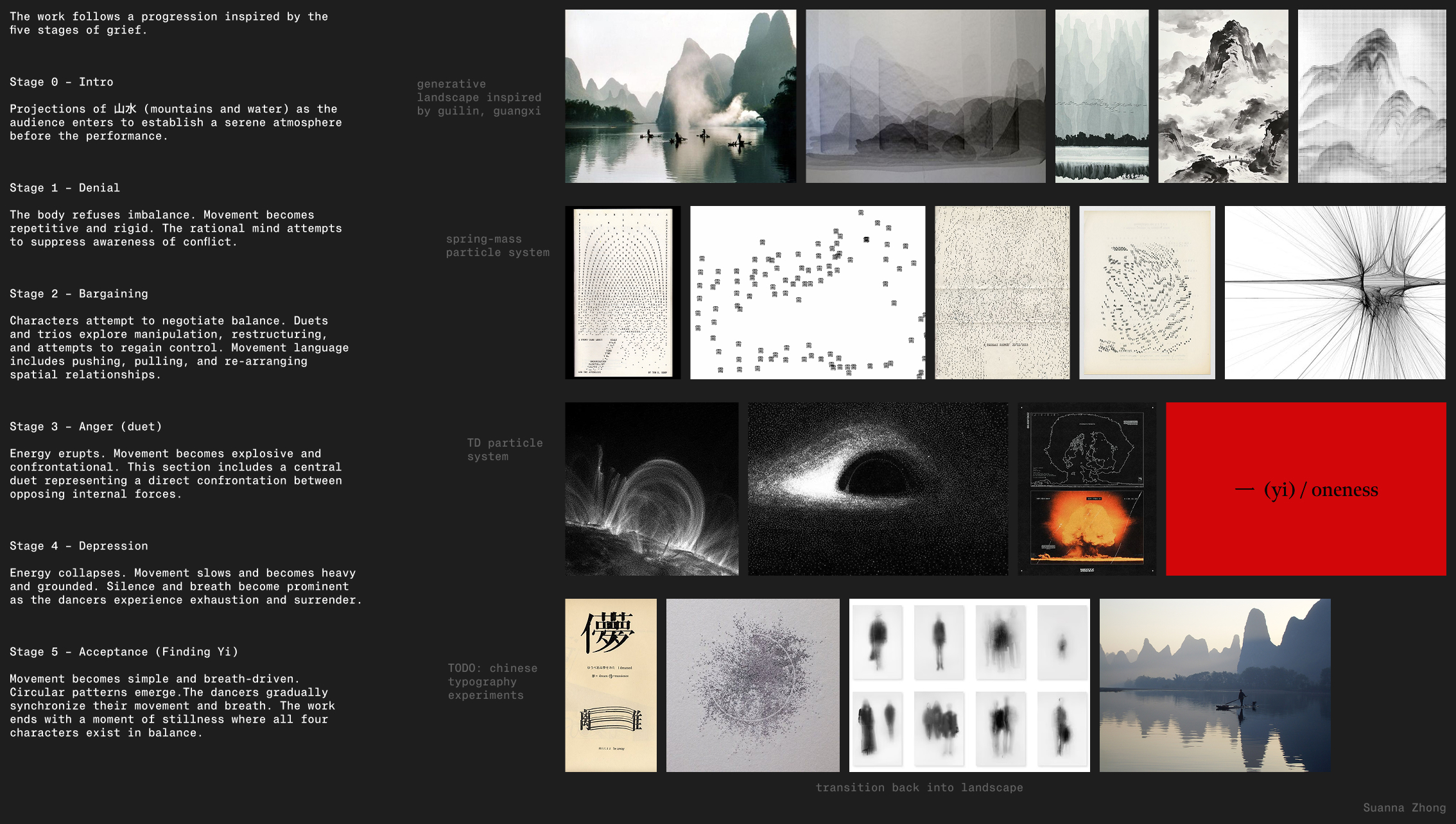

一 (yi) / oneness

TouchDesigner, p5.js, ml5.js, Google MediaPipe, OSC, WebSockets, Node.js

↗ GitHub ↗ Full Performance

For Ceci Sun's senior thesis, 一 (yi) / oneness, I was the sole designer of the real-time interactive visuals accompanying her live dance performance.

Background

In Golan Levin's Fall 2023 Creative Coding course, I used p5.js to create a gesture expander that rendered in real time. The project tracked body keypoints via the MIT ML5 Bodypose Keypoints library. Ceci Sun, a friend and dancer at Johns Hopkins University, performed choreography to "Motion Picture Soundtrack" by Radiohead.

When Ceci and I caught up in Winter 2025, she asked if I would be interested in creating a new version of the project for her senior thesis capstone performance. Given that it had been two years since we last collaborated and that newer technologies had become available, I was really excited to create an improved version.

Thematic Underpinnings

Much of Ceci's creative practice is informed by mind-body connections through qigong principles. Her work combines Eastern and Western philosophical perspectives: Eastern traditions emphasize balance and the flow of energy, while Western contemporary dance practices explore emotional expression and psychological experience. As an American-born Chinese American, I have also grown up with a mix of Eastern and Western philosophies. Topics such as meditation and traditional Chinese medicine are deeply ingrained in my personal life, which I was able to draw on when working on this project.

Inspiration

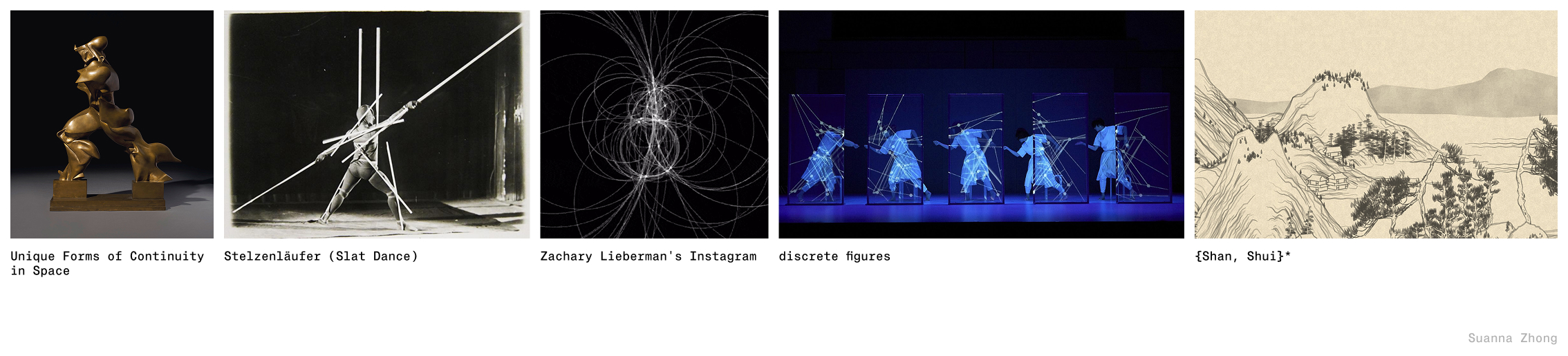

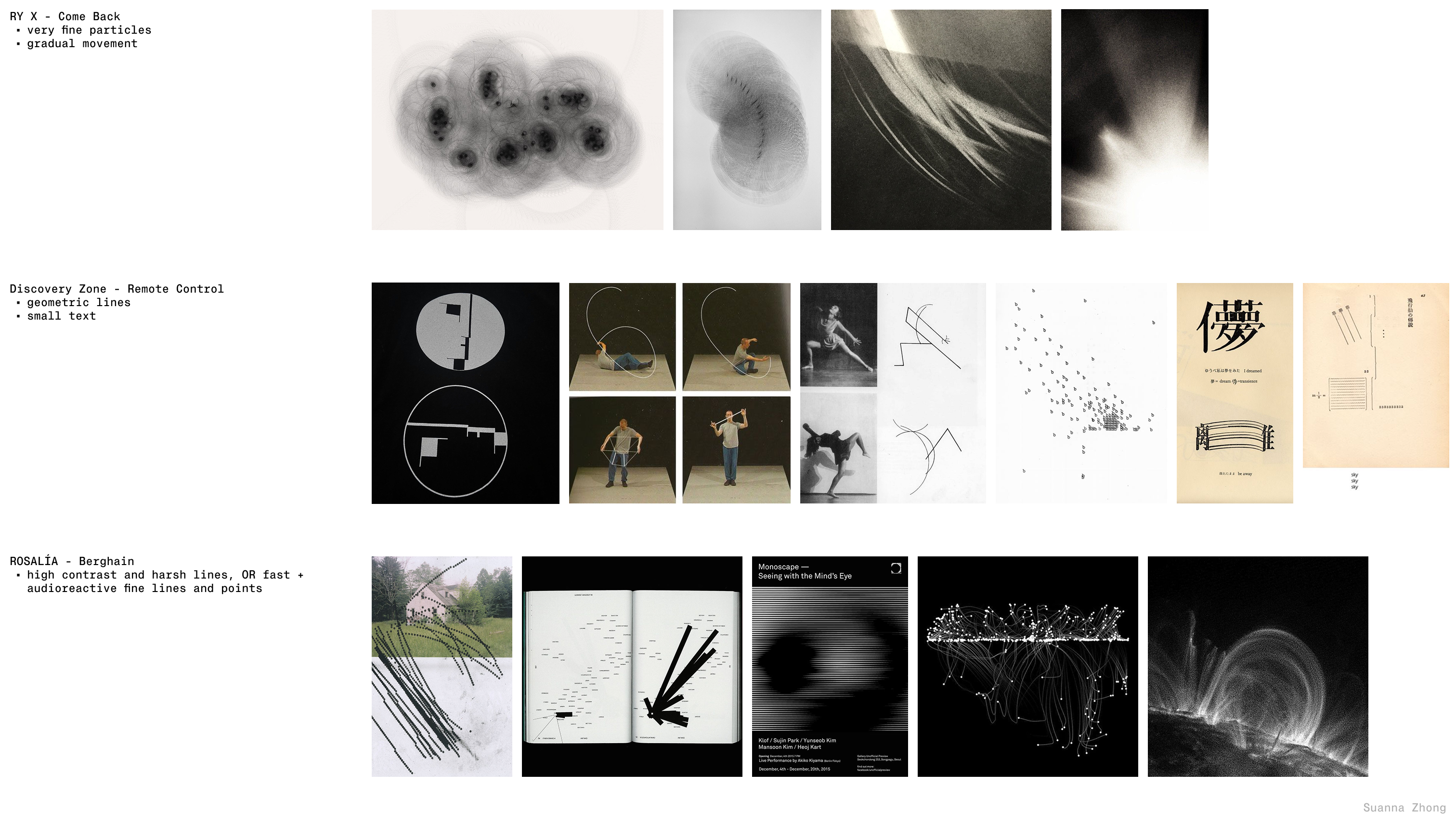

Previous works that create extensions of the human body include Umberto Boccioni's Unique Forms of Continuity in Space (1913), Oskar Schlemmer's Stelzenläufer (Slat Dance) (1927), and Zachary Lieberman's daily sketches. I was heavily inspired by discrete figures by Daito Manabe's Rhizomatiks Research group, as well as Lingdong Huang's {Shan, Shui}*. For time-based visuals, I often find it easier to figure out the music first, so Ceci sent me some placeholder tracks that helped guide what the visuals should look like.

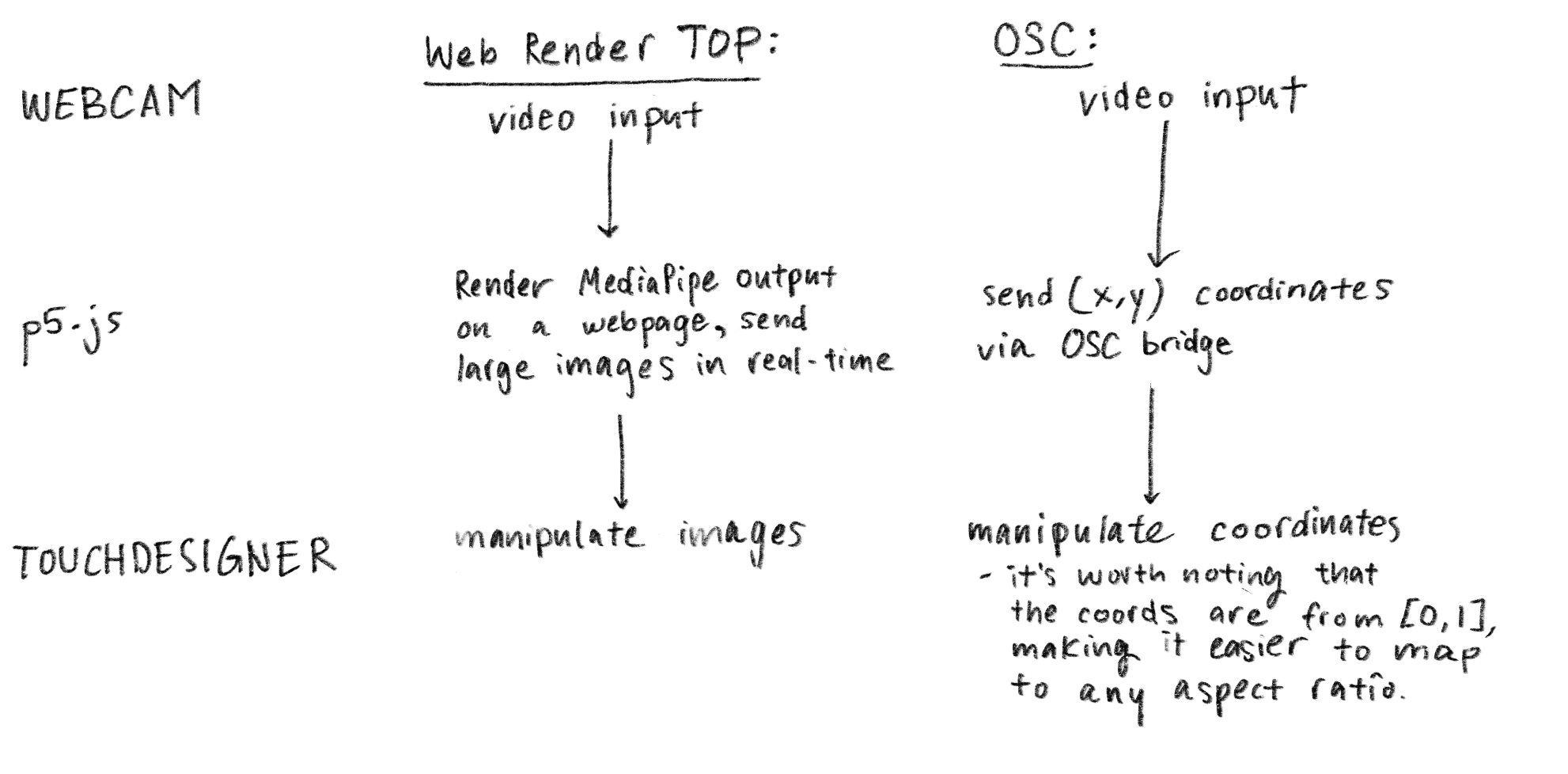

Web Render vs. OSC

I had two options for connecting MediaPipe to TouchDesigner. TouchDesigner's Web Render node can display a webpage as a texture, which would show the MediaPipe visualization directly. With this approach, I would receive an image as an input.

The alternative was to send the numerical pose data over OSC, which I ended up using because it had lower latency. The programming workflow of using variables also felt more intuitive to me than encoding and decoding an image. Additionally, I could create effects using placeholder values in TouchDesigner and then replace them with the OSC values later.

When it came to deciding what equipment to use for the live performance, the OSC approach also turned out to be more reliable, because images are very expensive to send compared with a few numbers. Depending on the size of the venue, longer cables may introduce unpredictable behavior as well. Overall, reducing the number of dependencies and the amount of hardware allowed us to focus on the creative process and the performance itself.
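To give a rough sense of why numbers are so much cheaper to send than images, here is a minimal sketch of how a single OSC message is laid out, following the OSC 1.0 encoding rules (null-terminated strings padded to four bytes, big-endian 32-bit floats). The address `/pose/leftReach` and the 1280×720 RGBA frame size are assumptions chosen for illustration, not values from the project, and the actual piece may have relied on an existing OSC library rather than hand-rolled encoding.

```javascript
// Encode an OSC string: ASCII bytes, NUL-terminated, padded to a multiple of 4.
function oscString(text) {
  const length = Math.ceil((text.length + 1) / 4) * 4;
  const bytes = new Uint8Array(length); // zero-filled, so the padding is implicit
  for (let i = 0; i < text.length; i++) {
    bytes[i] = text.charCodeAt(i); // assumes plain ASCII input
  }
  return bytes;
}

// Encode one OSC message whose arguments are all 32-bit floats.
function oscMessage(address, values) {
  const head = oscString(address);
  const tags = oscString("," + "f".repeat(values.length)); // type tag string
  const out = new Uint8Array(head.length + tags.length + 4 * values.length);
  out.set(head, 0);
  out.set(tags, head.length);
  const view = new DataView(out.buffer);
  values.forEach((value, i) => {
    // OSC numbers are big-endian, hence littleEndian = false.
    view.setFloat32(head.length + tags.length + 4 * i, value, false);
  });
  return out;
}

// Hypothetical address and value, for illustration only.
const packet = oscMessage("/pose/leftReach", [0.5]);

// Hypothetical uncompressed frame: 1280 x 720 pixels, 4 bytes per pixel.
const frameBytes = 1280 * 720 * 4;

console.log(`OSC message: ${packet.length} bytes`);
console.log(`Raw video frame: ${frameBytes} bytes`);
```

Under these assumptions, one pose parameter fits in a few dozen bytes, while a single uncompressed frame takes several megabytes.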

MediaPipe is really powerful because it can retrieve a lot of information super quickly. The framework provided three parameters for each of the 33 joints, and deciding which ones to use so that the mapping would look intuitive to the audience was an interesting challenge. When connecting the data to TouchDesigner, I observed that all of the data had a natural jitter, so it turned out that only a few parameters were enough to communicate the overall movement of the choreography.

I decided to use the distance between two specific joints to drive the animation. For example, the distance between the left hand and the left shoulder is easy for the dancer to control. A slider in the p5 GUI controlled how sensitive the mapping was.
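The mapping described above can be sketched roughly as follows. This is an illustration under assumptions rather than the project's actual code: it uses the left wrist (landmark 15) as a stand-in for the hand and the left shoulder (landmark 11) from MediaPipe's 33-landmark pose topology, measures the distance in normalized x/y coordinates only, and treats the slider as a simple multiplier. The function names `landmarkDistance` and `reachAmount` are hypothetical.

```javascript
// Indices into MediaPipe Pose's 33-landmark list.
const LEFT_SHOULDER = 11;
const LEFT_WRIST = 15; // assumption: the wrist stands in for "the left hand"

// Euclidean distance between two landmarks in normalized image coordinates.
// Depth (z) is ignored here to keep the sketch simple.
function landmarkDistance(a, b) {
  return Math.hypot(a.x - b.x, a.y - b.y);
}

// Scale the raw distance by a sensitivity factor (the slider value)
// and clamp the result to the range [0, 1] for use as an animation driver.
function reachAmount(landmarks, sensitivity) {
  const raw = landmarkDistance(landmarks[LEFT_WRIST], landmarks[LEFT_SHOULDER]);
  return Math.min(1, Math.max(0, raw * sensitivity));
}

// Made-up frame: 33 landmarks, with only the two relevant joints moved.
const frame = Array.from({ length: 33 }, () => ({ x: 0.5, y: 0.5, z: 0 }));
frame[LEFT_SHOULDER] = { x: 0.6, y: 0.4, z: 0 };
frame[LEFT_WRIST] = { x: 0.9, y: 0.8, z: 0 };

console.log(reachAmount(frame, 1.5)); // moderate sensitivity
console.log(reachAmount(frame, 10)); // high sensitivity saturates at 1
```

Reducing the pose to one clamped value like this means only a single number per frame needs to reach TouchDesigner, and raising the sensitivity lets smaller gestures reach the full range.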

Stage Setup

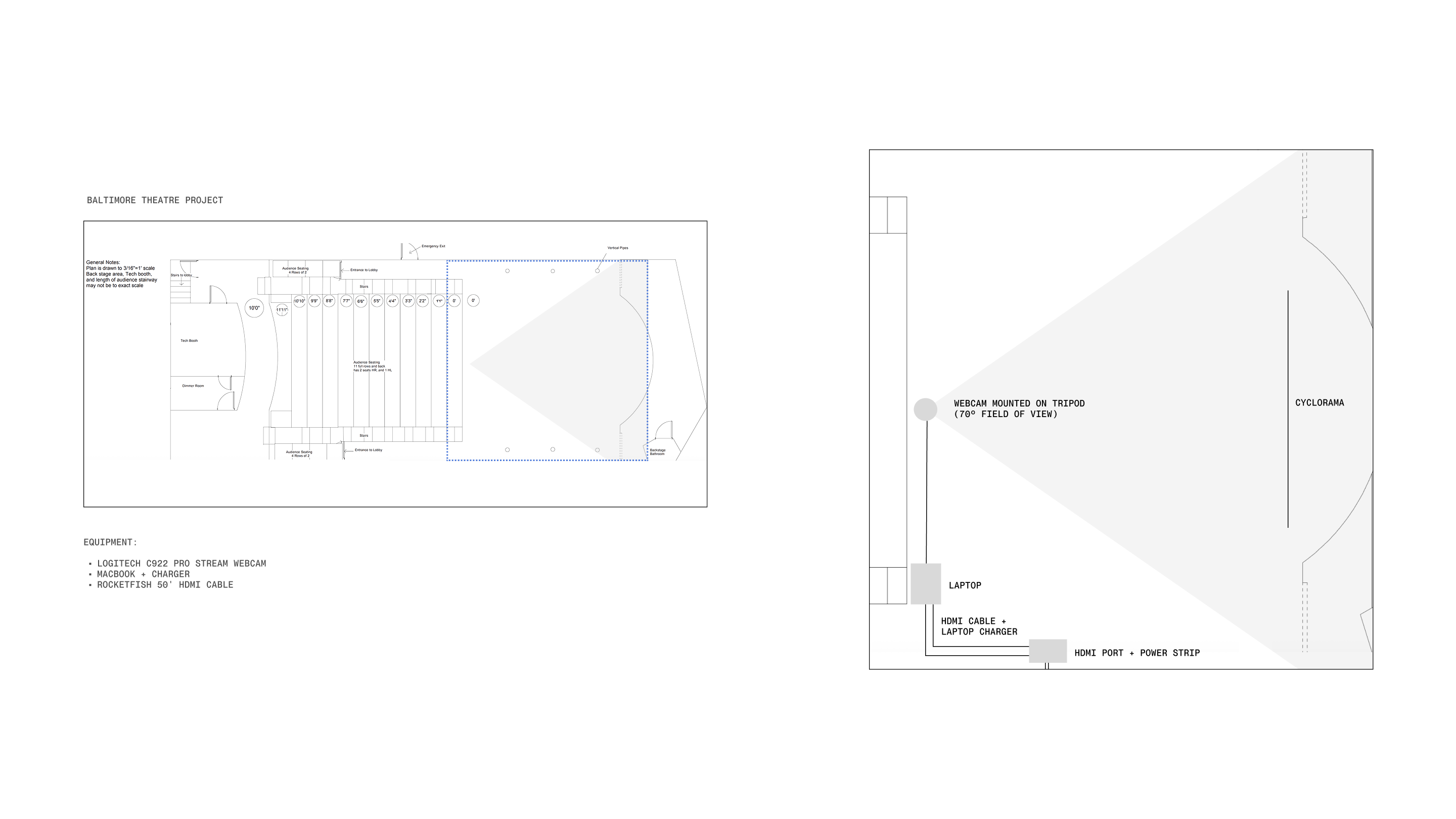

To reduce the surface area of potential error, we limited the equipment to a single laptop with a mirrored output to the projector. The scene management system was built using Three.js, chosen for its hardware efficiency. All non-interactive media was stored locally, as the venue's network was not reliable enough to depend on for live playback. Since the performance is partially improvised, the timing for each section is never the same, so I sat in the front row to cue scenes.

Limitations and Challenges

Since I only needed a few parameters to communicate the movement, it was not necessary to use all of the joint data. Unfortunately, the MediaPipe library does not support turning off certain joints. If I were to scale up the project, I would need to create a custom model that only tracks the needed joints to improve overall performance. Also, MediaPipe is best trained for waist-up poses filmed on the webcam and tracks at most one person at a time. I anticipate that when an improved model is released in the future, a lot of new possibilities will open up.

Another challenging aspect of this project was actually making the interactive visuals tell a story. One piece of advice that Golan gave me that helped a lot was to think of particles as a substance that can be molded to mimic natural phenomena, such as clouds, snow, or sand.

More Experiments

Credits

Special Thanks

I would like to thank Ceci Sun for inviting me to collaborate on this project, Golan Levin and Kyle McDonald for their mentorship, and Viviana Chen for making the time to test out the software in Pittsburgh. I would also like to thank my beautiful and intelligent classmates for believing in me and providing feedback to help me grow.
